Let’s start with a staggering statistic: According to McKinsey, generative AI, or GenAI, will add somewhere between $2.6 trillion and $4.4 trillion per year to global economic output, with enterprises at the forefront. Whether you’re a manufacturer looking to optimize your global supply chain, a hospital analyzing patient data to suggest personalized treatment plans, or a financial services company wanting to improve fraud detection, AI may hold the keys for your organization to unlock new levels of efficiency, insight, and value creation.

Many of the CIOs and technology leaders we talk to today recognize this. In fact, most say that their organizations are planning full GenAI adoption within the next two years. Yet according to the Cisco AI Readiness Index, only 14% of organizations report that their infrastructures are ready for AI today. What’s more, 85% of AI projects stall or are disrupted once they’ve started.

The reason? There’s a high barrier to entry. An organization may have to completely overhaul its infrastructure to meet the demands of specific AI use cases, build the skill sets needed to develop and support AI, and deal with the additional cost and complexity of securing and managing these new workloads.

We believe there’s an easier path forward. That’s why we’re excited to introduce a strong lineup of products and solutions for data- and performance-intensive use cases like large language model training, fine-tuning, and inferencing for GenAI. Many of these new additions to Cisco’s AI infrastructure portfolio are being announced at Cisco Partner Summit and can be ordered today.

These announcements address the comprehensive infrastructure requirements that enterprises have across the AI lifecycle, from building and training sophisticated models to widespread use for inferencing. Let’s walk through how that could work with the new products we’re introducing.

Accelerated Compute

A typical AI journey starts with training GenAI models on large amounts of data to build the model’s intelligence. For this critical stage, the new Cisco UCS C885A M8 Server is a powerhouse designed to tackle the most demanding AI training tasks. With its high-density configuration of NVIDIA H100 and H200 Tensor Core GPUs, coupled with the efficiency of the NVIDIA HGX architecture and AMD EPYC processors, the UCS C885A M8 provides the raw computational power needed to handle massive data sets and complex algorithms. Moreover, its simplified deployment and streamlined management make it easier than ever for enterprise customers to embrace AI.

Scalable Network Fabric for AI Connectivity

To train GenAI models, clusters of these powerful servers often work in unison, generating an immense flow of data that requires a network fabric capable of handling high bandwidth with minimal latency. This is where the newly launched Cisco Nexus 9364E-SG2 Switch shines. Its high-density 800G aggregation ensures smooth data flow between servers, while advanced congestion management and large buffer sizes minimize packet drops, keeping latency low and training performance high. The Nexus 9364E-SG2 serves as a cornerstone for a highly scalable network infrastructure, allowing AI clusters to expand seamlessly as organizational needs grow.

Buying Simplicity

Once these powerful models are trained, you need infrastructure deployed for inferencing to deliver real value, often across a distributed landscape of data centers and edge locations. We have greatly simplified this process with new Cisco AI PODs that accelerate deployment of the entire AI infrastructure stack. No matter where you fall on the spectrum of use cases mentioned at the beginning of this blog, AI PODs are designed to offer a plug-and-play experience with NVIDIA accelerated computing. These pre-sized and pre-validated bundles of infrastructure eliminate the guesswork from deploying edge inferencing, large-scale clusters, and other AI inferencing solutions, with more use cases planned for release over the next few months.

Our goal is to enable customers to confidently deploy AI PODs with predictability around performance, scalability, cost, and outcomes, while shortening the time to production-ready inferencing with a full stack of infrastructure, software, and AI toolsets. AI PODs include NVIDIA AI Enterprise, an end-to-end, cloud-native software platform that accelerates data science pipelines and streamlines AI development and deployment. Managed by Cisco Intersight, AI PODs provide centralized control and automation, simplifying everything from configuration to day-to-day operations.

Cloud Deployed and Cloud Managed

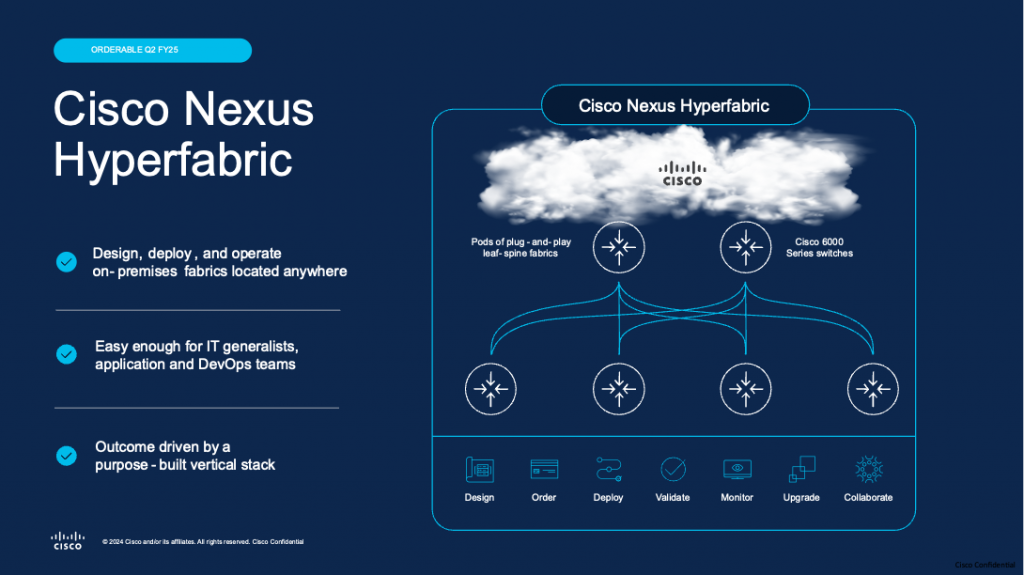

To help organizations modernize their data center operations and enable AI use cases, we further simplify infrastructure deployment and management with Cisco Nexus Hyperfabric, a fabric-as-a-service solution announced earlier this year at Cisco Live. Cisco Nexus Hyperfabric features a cloud-managed controller that simplifies the design, deployment, and management of the network fabric for consistent performance and operational ease. The hardware-accelerated performance of Cisco Nexus Hyperfabric, with its inherent high bandwidth and low latency, optimizes AI inferencing, enabling fast response times and efficient resource utilization for demanding, real-time AI applications. Additionally, Cisco Nexus Hyperfabric’s comprehensive monitoring and analytics capabilities provide real-time visibility into network performance, allowing proactive issue identification and resolution to maintain a smooth and reliable inferencing environment.

By providing a seamless continuum of solutions, from powerful training servers and high-performance networking to simplified inference deployments, we’re enabling enterprises to accelerate their AI initiatives, unlock the full potential of their data, and drive meaningful innovation.

Availability Information and More

The Cisco UCS C885A M8 Server is now orderable and is expected to ship to customers by the end of this year. The Cisco AI PODs will be orderable in November. The Cisco Nexus 9364E-SG2 Switch will be orderable in January 2025, with availability beginning in Q1 of calendar year 2025. Cisco Nexus Hyperfabric will be available for purchase in January 2025 with 30+ certified partners. Hyperfabric AI will be available in May and will include a plug-and-play AI solution comprising Cisco UCS servers (with embedded NVIDIA accelerated computing and AI software) and optional VAST storage.

For more information about these products, please visit:

If you are attending Cisco Partner Summit this week, please visit the solution showcase to see the Cisco UCS C885A M8 Server and the Cisco Nexus 9364E-SG2 Switch. You can also attend business insights session BIS08, “Revolutionize tomorrow: Unleash innovation through the power of AI-ready infrastructure,” for more details on the products and solutions announced.
